🚧 Enterprise AI: The Unseen Hurdles

🤖🎩 Welcome, caffeinated AI enthusiasts! Here’s another steaming hot edition of The AI Espresso! Today we dive into an eclectic mix of AI marvels that’ll keep your adrenaline pumping:

🖼️ AI-Generated Media of the Week

📰 AI News

🚀 Engineering: Web Development

🎨 Art & Design: UX Research

💼 Business: Operations (ChatGPT Code Interpreter)

🚀 AI Development - Technical: Evaluators

Tap that subscribe button below for more of The AI Espresso!

🖼️ AI-Generated Media of the Week

📰 AI News

ChatGPT Empowered Conversations: OpenAI's ChatGPT now includes custom instructions, which let users define rules and goals once and have them applied to later conversations. This versatile feature broadens ChatGPT's usefulness across sectors and allows for tailored requests and more closely customized responses. (🚀 Explore Now)

Generative AI Enterprise Hurdles: A study by ClearML and AI Infrastructure Alliance (AIIA) has brought to light the challenges Fortune 1000 enterprises face in adopting generative AI. According to the study, 59% of C-suite executives feel constrained by limited resources and budget, impeding the realization of the potential value of AI. A striking 66% of participants can't fully assess the impact and return on investment (ROI) of their AI/ML projects, pointing out issues with underfunded, understaffed, and under-governed AI, ML, and engineering teams. (🔍 Discover Insights)

🚀 Engineering: Web Development

AI Tools to Boost Your Game: Ever sketched a web design on a napkin and wished for a magical conversion into code? Your digital genie has arrived! Uizard is an AI-powered rapid prototyping tool that whisks your hand-drawn sketches into fully fledged, high-fidelity prototypes. Develop prototypes for landing pages, productivity applications, iOS mobile apps, and SaaS web applications. Using cutting-edge computer vision and machine learning, it cooks up boilerplate code in HTML & CSS, React, or Android. Time-saving, error-slashing, and absolute wizardry!

Engineering Prompt of the Week: “How can I use data-driven design to optimize my website’s performance?” (See Full Example)

🎨 Art & Design: UX Research

AI Tools to Boost Your Game: Optimal Workshop uses AI to assist with information architecture research, card sorting, and tree testing, providing valuable insights into how users navigate and understand website structures.

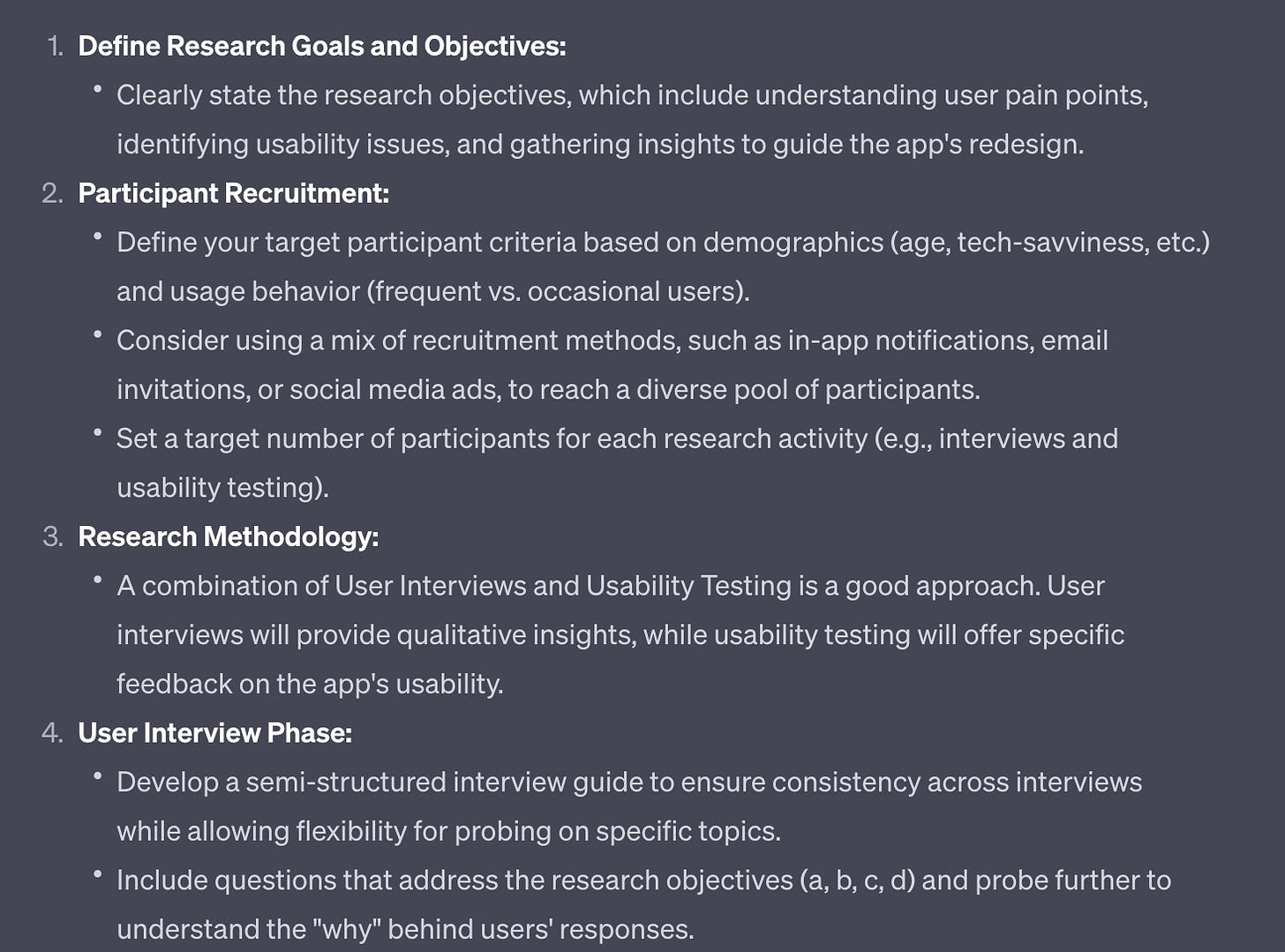

Art & Design Prompt of the Week: “Hi ChatGPT, I'm working on planning a UX research study and need assistance with the study design. Could you please help me with generating ideas and providing a structured plan for conducting an effective research study?…” (See Full Example)

💼 Business: Operations

AI Tools to Boost Your Game: Take your data handling to the next level with OpenAI’s ChatGPT Code Interpreter. This powerful plugin extends ChatGPT's abilities, letting it write and run code in response to natural-language requests. Whether it's data analysis or file conversion, this tool has you covered. It also handles file uploads and downloads, so you can work directly with data files, images, videos, and more, and it supports a wide range of file formats such as CSV and JSON. Get ready to revolutionize your data operations! 📈
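To make the "data examination and file transformation" idea concrete, here is a minimal sketch of the kind of script such a tool might write for a small operations file. It is not output from Code Interpreter; the column names (`site`, `orders`, `avg_fulfillment_hours`) and all the sample values are made up for illustration, and it uses only the Python standard library.

```python
import csv
import io
import json
from statistics import mean

# Made-up sample data standing in for an uploaded CSV file.
CSV_TEXT = """site,orders,avg_fulfillment_hours
north,120,26.5
south,95,31.0
east,140,22.5
"""

def csv_to_records(text):
    """Parse CSV text into a list of dicts with numeric fields converted."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        {
            "site": row["site"],
            "orders": int(row["orders"]),
            "avg_fulfillment_hours": float(row["avg_fulfillment_hours"]),
        }
        for row in reader
    ]

def summarize(records):
    """Build a small report: totals, an average, and the slowest site."""
    slowest = max(records, key=lambda r: r["avg_fulfillment_hours"])
    return {
        "total_orders": sum(r["orders"] for r in records),
        "mean_fulfillment_hours": mean(r["avg_fulfillment_hours"] for r in records),
        "slowest_site": slowest["site"],
    }

records = csv_to_records(CSV_TEXT)
report = summarize(records)

# The same report serialized as JSON, i.e. a CSV-to-JSON transformation.
report_json = json.dumps(report, indent=2)
print(report_json)
```

In practice you would describe the report you want in plain language and let the tool propose the code; a sketch like this is mainly useful for checking that the generated logic matches what you asked for.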

Business Prompt of the Week: “I have a data analysis task that I need your help with. I have a set of operations data and I need to create a report. Here are some specifics:” (See ChatGPT’s Code Interpreter Example)

🚀 AI Development - Technical: Evaluators

AI Projects of the Week:

LangChain’s Evaluator: LangChain's Evaluator page presents a variety of tactics to assess the performance of LLM outputs. These include string-based, trajectory-based, and comparison-based evaluation methodologies. The choice of approach depends on the specific goals and data at hand.

EleutherAI’s LM Eval: lm-eval is an open-source repository offering a unified framework for evaluating LLM output across diverse Natural Language Understanding (NLU) tasks. The package streamlines quality checks by letting you run standardized, task-specific evaluations from one place.

💡 Insights:

Evaluating LLM output is a complex challenge that can be framed and solved in several ways. The simplest method compares the output against a test set of expected answers (string-based), which works when the model is expected to produce fixed outputs. In contrast, comparison-based evaluation leaves more room for creativity, for example by calculating perplexity scores, which draw on information theory concepts to assess model performance.
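As a rough illustration of the two ideas above, the sketch below shows a string-based exact-match score and a perplexity calculation in plain Python. It does not use LangChain or lm-eval; the function names and sample data are hypothetical. It also assumes you already have per-token natural-log probabilities from your model, and that light normalization (lowercasing, collapsing whitespace) is acceptable for your task.

```python
import math

def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not count as misses."""
    return " ".join(text.lower().split())

def exact_match_accuracy(predictions, references):
    """String-based evaluation: fraction of predictions equal to their reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)

def perplexity(token_logprobs):
    """Perplexity = exp(-mean log-probability) over the tokens of a text.

    Lower values mean the model found the text less surprising.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

# Hypothetical model outputs compared with expected answers.
preds = ["Paris", "  berlin ", "Rome"]
refs = ["paris", "Berlin", "Madrid"]
accuracy = exact_match_accuracy(preds, refs)

# A model that assigns probability 0.25 to every token is as uncertain as a
# uniform choice among four options.
ppl = perplexity([math.log(0.25)] * 4)

print(f"exact match: {accuracy:.2f}, perplexity: {ppl:.2f}")
```

Exact match is strict by design, so it suits tasks with a single correct answer; for free-form text it will usually undercount good responses.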

Example of Perplexity Scoring. (Source: AIMultiple)

Trajectory-based methods are akin to feedback mechanisms in the Agent system, where another LLM evaluates each step taken by the main agent. This approach proves particularly valuable when quantitatively assessing agents, as they can be challenging to evaluate through traditional means.
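The step-by-step judging idea can be sketched as follows. This is not LangChain's implementation: `Step`, `score_trajectory`, and `toy_judge` are hypothetical names, and the judge here is a simple rule standing in for the second LLM so that the example runs without any API calls.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Step:
    action: str        # what the agent decided to do
    observation: str   # what came back from the tool or environment

# A judge receives the task, the steps taken so far, and the step to grade,
# and returns a score between 0 and 1. In a real setup this would be an LLM call.
Judge = Callable[[str, List[Step], Step], float]

def score_trajectory(task: str, steps: List[Step], judge: Judge) -> float:
    """Average the judge's per-step scores, clamped to the range [0, 1]."""
    if not steps:
        return 0.0
    scores = []
    for i, step in enumerate(steps):
        raw = judge(task, steps[:i], step)
        scores.append(min(1.0, max(0.0, raw)))
    return sum(scores) / len(scores)

def toy_judge(task: str, history: List[Step], step: Step) -> float:
    """Stand-in judge: penalize a step that repeats an earlier action."""
    repeated = any(previous.action == step.action for previous in history)
    return 0.0 if repeated else 1.0

# Made-up agent run: the second step wastefully repeats the first.
steps = [
    Step("lookup: order 42 status", "shipped"),
    Step("lookup: order 42 status", "shipped"),
    Step("answer: order 42 has shipped", "done"),
]
trajectory_score = score_trajectory("Report the status of order 42", steps, toy_judge)
print(f"trajectory score: {trajectory_score:.2f}")
```

Swapping `toy_judge` for a function that prompts an LLM keeps the rest of the loop unchanged, which is the main reason for passing the judge in as a parameter.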

That's all for now, technology explorers! Don't hoard this knowledge; click the share button below and disseminate your passion for AI! 🤖☕